Over the past few days, a study from MIT gained traction across social media. Dramatic interpretations quickly followed: that using ChatGPT was “shutting down” parts of the brain, that we’re losing our ability to think, that we’re becoming avatars on autopilot.

Posts shared colorful images and catchy headlines, often without explaining what the study actually investigated. And here, I’ll be honest: I didn’t read the full paper. Neither, most likely, did most of the people who posted definitive opinions on it.

But I did read critical, informed coverage from reliable science communication sources. What I found confirmed my initial impression: the study is relevant, but says nothing close to “AI is melting your brain”.

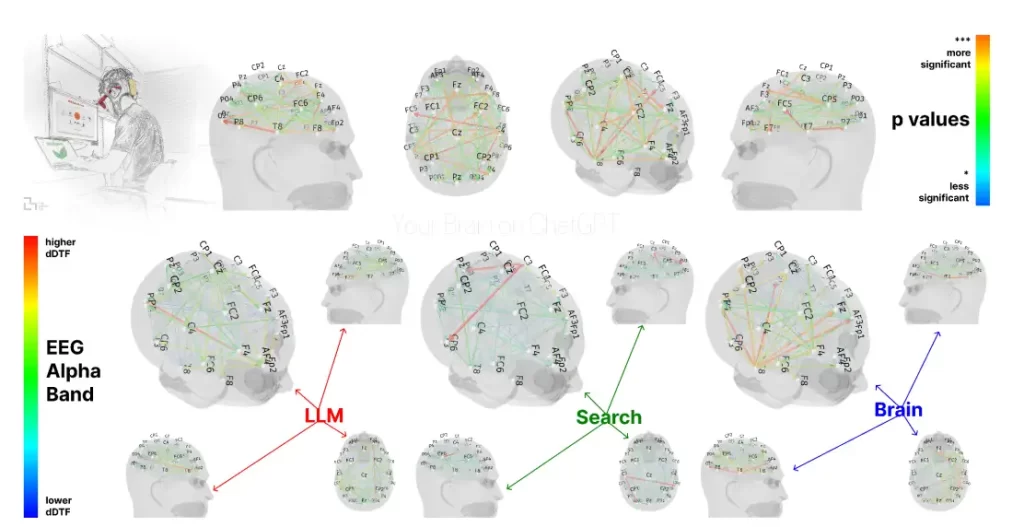

LLM, Search Engine, Brain-only, including p-values to show significance from moderately significant (*) to highly significant (***). Source.

What the study actually investigated

Researchers from the MIT Media Lab analyzed how different types of support (no tools, a traditional search engine, or ChatGPT) influence cognitive behavior during essay-writing tasks.

They used EEG to measure brain activity and also assessed memory, sense of authorship, and output quality. Their findings showed that:

- Participants using only their own reasoning exhibited greater activation in brain regions related to analytical effort and working memory.

- Those who used ChatGPT showed less neural engagement during the task and recalled less of what they had written afterward.

- The study raises the hypothesis that long-term use of AI assistants may lead to a kind of “cognitive debt”: a reduction in mental engagement during tasks that typically demand more effort.

There’s no claim of brain damage or irreversible loss of cognitive abilities. What’s observed is how the brain adapts when we automate complex tasks — something that already happens with calculators, GPS, or even text editors.

Automation ≠ dumbing down

The idea that using AI makes us less intelligent depends entirely on how and why we use these tools. Automating part of a task doesn’t mean losing the ability to do it — it means we’re shifting effort elsewhere.

The risk lies in uncritical and constant use, where the tool stops being an aid and becomes a substitute. And that applies to any technology, not just generative AI.

We still need more studies, across more diverse tasks and longer timeframes, to fully understand the real effects of this kind of use. But the MIT study, despite how it’s been interpreted by many, is actually a balanced and well-grounded starting point for reflecting on the limits and benefits of cognitive automation.

And what does that have to do with synthetic data?

At SynthVision, our relationship with AI is quite different from what’s discussed in the study. We don’t use AI to make decisions or to randomly generate data.

Our focus is control. Through carefully parametrized 3D simulations, we generate highly realistic and precisely annotated synthetic data, providing a trustworthy foundation for training computer vision models.

Image generation and annotation are automated: efficiently, at scale, and often with more consistency than manual processes. What is not automated is the set of decisions about what gets generated: which variables will change, under what conditions, and for what goal.

In other words, we don’t hand off the task to a model and walk away — we intentionally structure the data that AI models will use to learn, with technical judgment and human input at every step.
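To make that split concrete, here is a minimal Python sketch of the idea. It is an illustration only, not SynthVision's actual pipeline: the `SceneSpec` class, the `build_scene_specs` function, and the parameter names (`lighting`, `camera_angle_deg`, `occlusion`) are all hypothetical. A person chooses which variables vary and over what values; the code only enumerates the resulting combinations, each of which would then be handed to a renderer.

```python
from dataclasses import dataclass
from itertools import product


@dataclass(frozen=True)
class SceneSpec:
    """One fully specified scene to render. All fields are hypothetical examples."""
    lighting: str
    camera_angle_deg: int
    occlusion: float


def build_scene_specs(lighting, camera_angles_deg, occlusion_levels):
    """Enumerate every combination of the human-chosen parameter values."""
    return [
        SceneSpec(light, angle, occ)
        for light, angle, occ in product(lighting, camera_angles_deg, occlusion_levels)
    ]


# The human decision: which variables change, and over which values.
specs = build_scene_specs(
    lighting=["daylight", "overcast", "night"],
    camera_angles_deg=[0, 45, 90],
    occlusion_levels=[0.0, 0.5],
)

# The automated part: 3 x 3 x 2 = 18 scene specifications, ready for rendering.
print(len(specs))
print(specs[0])
```

Because every scene comes from an explicit list of values, the dataset's coverage can be read straight off the configuration instead of being inferred from the images afterwards.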

Conclusion

The MIT study is not an apocalyptic warning, nor a condemnation of AI use. It’s a thoughtful, methodical contribution that reinforces something we already know: tools shape how we think — and that deserves attention.

There’s nothing wrong with using AI assistants. The problem begins when we use them uncritically.

AI is still what it’s always been: a tool. And like any powerful tool, its value lies in how we use it.

Are you automating without thinking, or using AI with purpose and awareness?

At SynthVision, we believe that meaningful AI starts at the base: the data.

And that’s why we build that base with control, intention, and technical precision.

If you need scalable, high-quality, realistic data to train your models, start with the right simulation. Talk to us.