When it comes to computer vision and synthetic data, we often see closed-off research — applied to proprietary contexts and far from public access. That’s why the recent DAViD project (Data-efficient and Accurate Vision models from synthetic Data), presented by Microsoft at ICCV 2025, stands out: it was fully released to the public, including code, dataset, and models.

The project highlights something we at SynthVision know very well: high-quality synthetic data can train complete, efficient, and accurate vision models — without relying on real images.

What is DAViD?

DAViD is a multitask computer vision system based on transformer architecture, trained entirely on synthetic data. The name is a nod both to Michelangelo’s sculpture — symbolizing anatomical precision — and to the classic idea of David versus Goliath, with a small model tackling massive challenges.

Microsoft developed DAViD using a proprietary synthetic dataset called SynthHuman, composed of approximately 300,000 highly realistic images of human heads, upper bodies, and full bodies.

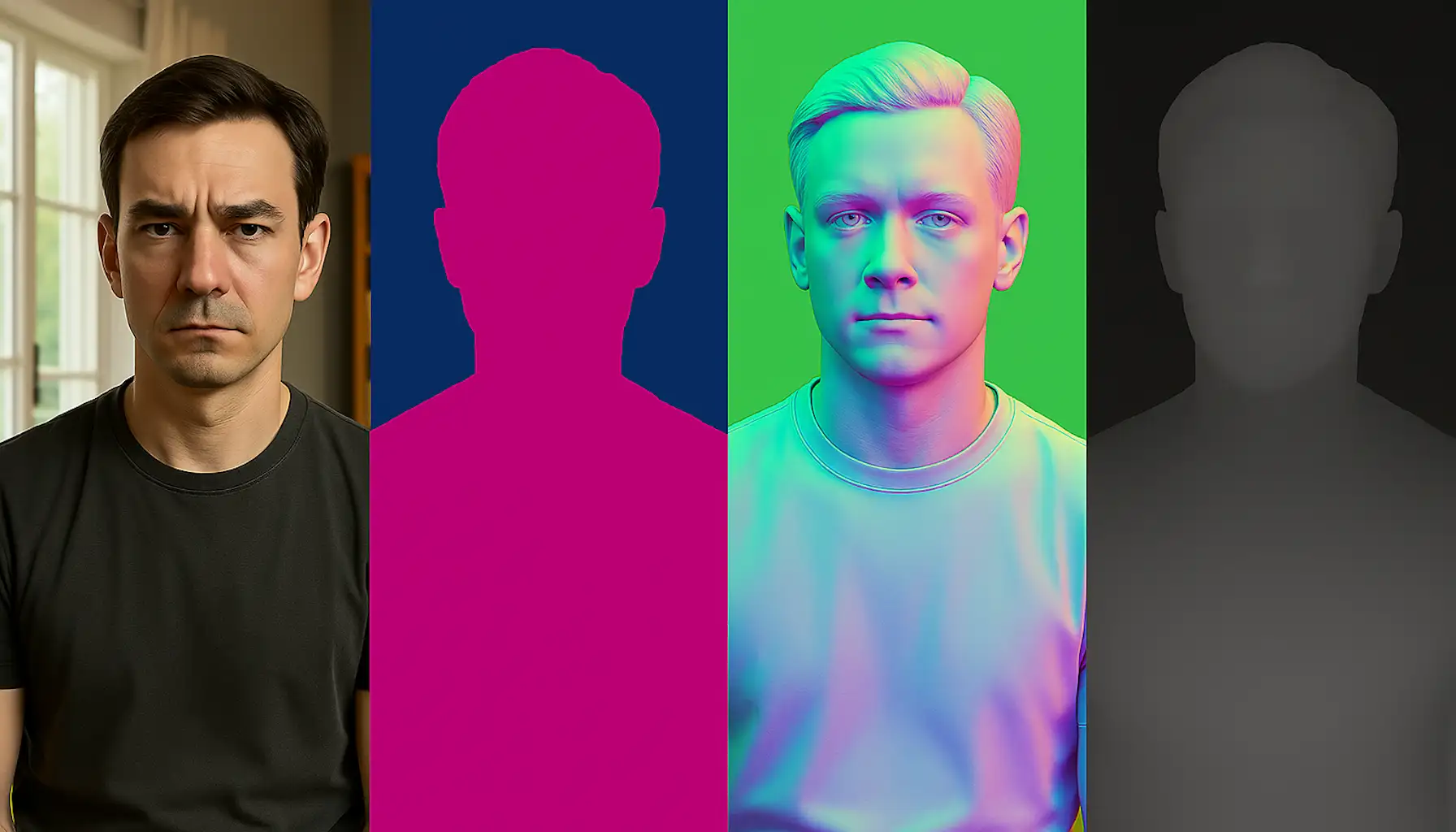

These images come richly annotated with:

- Alpha mask (foreground segmentation)

- Depth map

- Surface normals

- Camera parameters

And all of this comes at zero manual annotation cost — something only possible in synthetic environments.

A compact, multitask, and efficient model

The DAViD model is based on the Dense Prediction Transformer (DPT) architecture and was trained to simultaneously predict:

- Foreground mask

- Depth

- Surface normals

In other words, from a single image, the model can perform multiple visual tasks in a coherent and coordinated way. This not only reduces the need to train and maintain separate models, but also enables faster inference — just 21 ms per frame on an A100 GPU.

Most importantly: all this performance was achieved using only synthetic data. No real images were used during training.

Real-world results — with zero real supervision

Perhaps the most impressive aspect of DAViD is its performance on real images, despite being trained exclusively on synthetic ones. Microsoft evaluated the model on two real-world datasets — Goliath and Hi4D — and the results were outstanding.

The paper also presents several comparison tables showing DAViD’s performance against models trained on real datasets like THuman2.0 and RenderPeople. In tasks such as depth estimation, surface normals, and soft segmentation, DAViD matches or surpasses state-of-the-art models — while using a more compact architecture and achieving faster inference.

This offers public, verifiable evidence that high-quality synthetic data can serve as the sole training source for models performing impressively on real-world inputs.

The power of synthetic data — when well applied

For those of us who have worked with synthetic data for years, DAViD is a public example of what we’ve experienced in practice: when you control every aspect of the scene — camera, lighting, texture, geometry, depth, noise — you can build datasets that are incredibly rich, balanced, and accurately labeled.

The result is a more direct training process, free from hidden biases, with surprising generalization power. This is particularly valuable for tasks such as:

- Precise segmentation

- Depth estimation

- 3D detection or reconstruction

- Training simulated robots or agents

Capturing this level of annotation in the real world would be expensive, slow, and often impractical.

Big Tech is showing the way

DAViD is not alone. More and more tech giants are publishing research built on intensive use of synthetic data:

- Meta used synthetic images to train SAM-2, its next-generation instance segmentation model.

- Grok, from X (formerly Twitter), was trained using massive volumes of synthetic data for language tasks.

- And now Microsoft, with DAViD, presents a fully open study focused on computer vision.

It’s important to note: the fact that these companies are publishing this kind of work now doesn’t mean they’re just starting to explore synthetic data — it means they’ve been using it deeply for years, and are only now making a small portion of it public.

We’re on the right track

At SynthVision, we develop high-fidelity synthetic datasets with full control over scene content — including lighting, material, geometry, and sensors (RGB cameras, depth, hyperspectral), as well as all parameters involved in the rendering pipeline.

Our focus is on helping companies accelerate experimentation, enable complex tasks with little real data, and deliver robust models already at the POC stage.

While many of our best results remain in private projects, public works like DAViD show that the path of synthetic data is more solid than ever — and those who master it now are in a strong position to lead the next wave of computer vision breakthroughs.

Want to see how synthetic data can accelerate your project? At SynthVision, we build custom synthetic datasets ready to plug into real-world pipelines — with photorealistic quality, precise annotations, and fast turnaround.